Hey fellow developers 👋, Kubernetes is being adopted by many organisations worldwide to simplify the orchestration and management of container-based applications. AWS offers its own managed Kubernetes service, Amazon Elastic Kubernetes Service (EKS). We can ask for a managed EKS cluster, and AWS takes care of the Kubernetes control plane for us, including patch management and software updates of the underlying hardware and software.

Prerequisites

- Knowledge of YAML files.

- Basic understanding of IaC and AWS CloudFormation.

- AWS CLI installed on your system.

- kubectl installed on your system.
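A quick way to confirm that the two command-line prerequisites are installed is to look them up on your `PATH`. This is a small standard-library sketch. `aws` and `kubectl` are the default executable names, and `check_prerequisites` is a hypothetical helper, not part of the tutorial's code.

```python
import shutil

# Default executable names of the CLI tools listed above (assumed; adjust if yours differ)
REQUIRED_TOOLS = ("aws", "kubectl")


def check_prerequisites(tools: tuple = REQUIRED_TOOLS) -> dict:
    """Return {tool: True/False} depending on whether each tool is found on PATH."""
    return {tool: shutil.which(tool) is not None for tool in tools}
```

`check_prerequisites()` returns something like `{'aws': True, 'kubectl': False}` when kubectl still needs to be installed.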

Base infrastructure needed before deploying the EKS cluster

- An S3 bucket to store CloudFormation artifacts. When we run `aws cloudformation deploy`, we specify an S3 bucket for the CLI to upload the template to (a bucket is mandatory for templates larger than 51,200 bytes).

- A VPC with public and private subnets and at least one security group that allows all outbound traffic only. The Kubernetes cluster uses this security group to talk to the internet and to managed node groups, and the same security group is attached to the worker nodes so they can communicate with the cluster and the internet. See the AWS documentation on Amazon EKS security group considerations for more information.

- You can make the managed EKS cluster's API endpoint public, private or both. In this tutorial, the cluster uses both public and private subnets. Amazon EKS recommends running a cluster in a VPC with public and private subnets, so that Kubernetes can create public load balancers in the public subnets that load-balance traffic to pods running on nodes in the private subnets, while we can still access the Kubernetes API publicly. See the AWS documentation on VPC considerations for Amazon EKS for more information.
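Because the cluster template imports its subnet IDs with `Fn::ImportValue`, a deployment fails if the VPC stack has not exported them. The sketch below checks for the four export names used in this tutorial and for the artifacts bucket. It assumes boto3 is installed and AWS credentials are configured, and the helper names are hypothetical.

```python
# Export names used by Fn::ImportValue in this tutorial's template; replace with your own
REQUIRED_EXPORTS = [
    "pras-vpc-public-subnet-a-id",
    "pras-vpc-public-subnet-b-id",
    "pras-vpc-private-subnet-a-id",
    "pras-vpc-private-subnet-b-id",
]


def missing_exports(required: list, available: list) -> list:
    """Return the required export names absent from `available`, in order."""
    available_set = set(available)
    return [name for name in required if name not in available_set]


def fetch_export_names() -> list:
    """List all CloudFormation export names in the current account and region."""
    import boto3  # lazy import: missing_exports() works without boto3 installed

    paginator = boto3.client("cloudformation").get_paginator("list_exports")
    return [export["Name"] for page in paginator.paginate() for export in page["Exports"]]


def bucket_exists(bucket_name: str) -> bool:
    """Return True if the artifacts bucket exists and is accessible with your credentials."""
    import boto3
    from botocore.exceptions import ClientError

    try:
        boto3.client("s3").head_bucket(Bucket=bucket_name)
        return True
    except ClientError:
        return False
```

With credentials configured, `missing_exports(REQUIRED_EXPORTS, fetch_export_names())` should return an empty list and `bucket_exists("pras-cloudformation-artifacts-bucket")` should return `True` before you deploy the cluster stack.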

CloudFormation template: managed EKS cluster

AWS documentation for deploying an EKS cluster via CloudFormation:

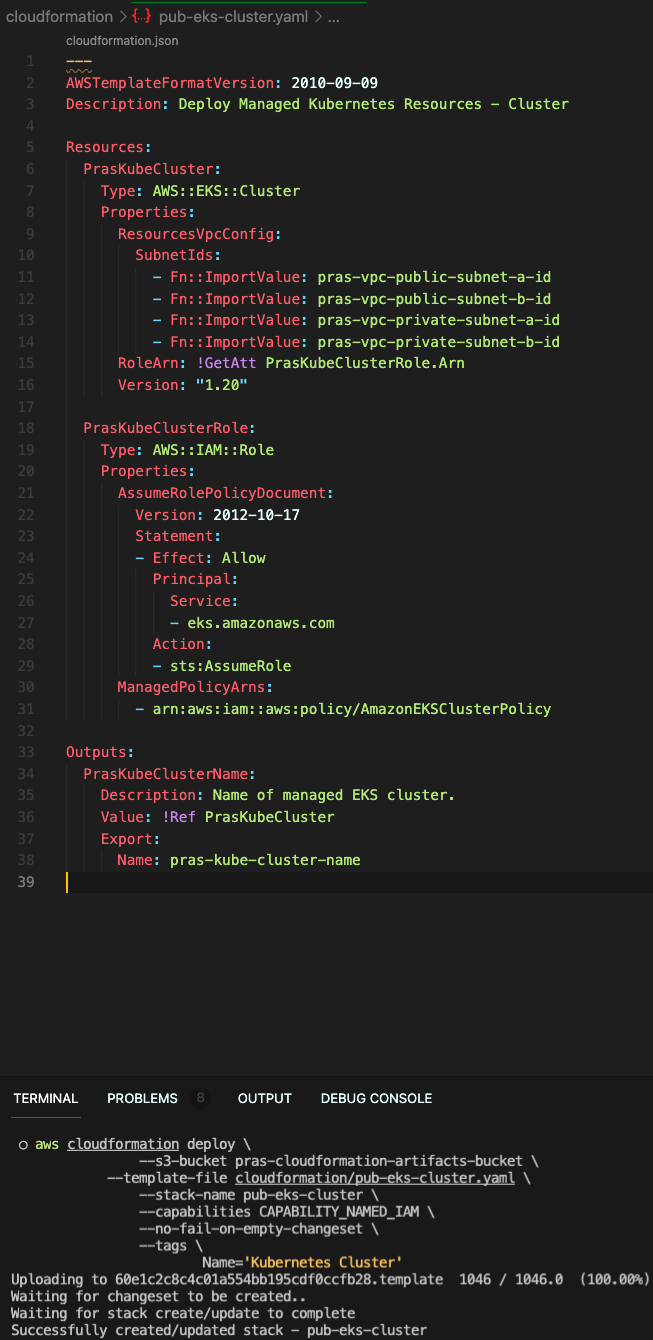

```yaml
---
AWSTemplateFormatVersion: 2010-09-09
Description: Deploy Managed Kubernetes Resources - Cluster
Resources:
  PrasKubeCluster:
    Type: AWS::EKS::Cluster
    Properties:
      ResourcesVpcConfig:
        SubnetIds:
          ## Subnet IDs exported by the VPC stack described above; replace the export names with your own
          - Fn::ImportValue: pras-vpc-public-subnet-a-id
          - Fn::ImportValue: pras-vpc-public-subnet-b-id
          - Fn::ImportValue: pras-vpc-private-subnet-a-id
          - Fn::ImportValue: pras-vpc-private-subnet-b-id
      RoleArn: !GetAtt PrasKubeClusterRole.Arn
      Version: "1.20"
  PrasKubeClusterRole:
    ## EKS needs permissions to interact with nodes and other AWS services
    ## https://docs.aws.amazon.com/eks/latest/userguide/service_IAM_role.html
    Type: AWS::IAM::Role
    Properties:
      AssumeRolePolicyDocument:
        Version: "2012-10-17"
        Statement:
          - Effect: Allow
            Principal:
              Service:
                - eks.amazonaws.com
            Action:
              - sts:AssumeRole
      ManagedPolicyArns:
        - arn:aws:iam::aws:policy/AmazonEKSClusterPolicy
Outputs:
  PrasKubeClusterName:
    Description: Name of managed EKS cluster.
    Value: !Ref PrasKubeCluster
    Export:
      Name: pras-kube-cluster-name
```

Now that we have a CloudFormation template, we can run the following command to create a managed EKS cluster using IaC (AWS CloudFormation):

```bash
# As mentioned before, we need an S3 bucket to store CloudFormation artifacts
aws cloudformation deploy \
  --s3-bucket pras-cloudformation-artifacts-bucket \
  --template-file cloudformation/pub-eks-cluster.yaml \
  --stack-name pub-eks-cluster \
  --capabilities CAPABILITY_NAMED_IAM \
  --no-fail-on-empty-changeset \
  --tags Name='Kubernetes Cluster'
```

Here is a screenshot of how I ran it from my local command line:

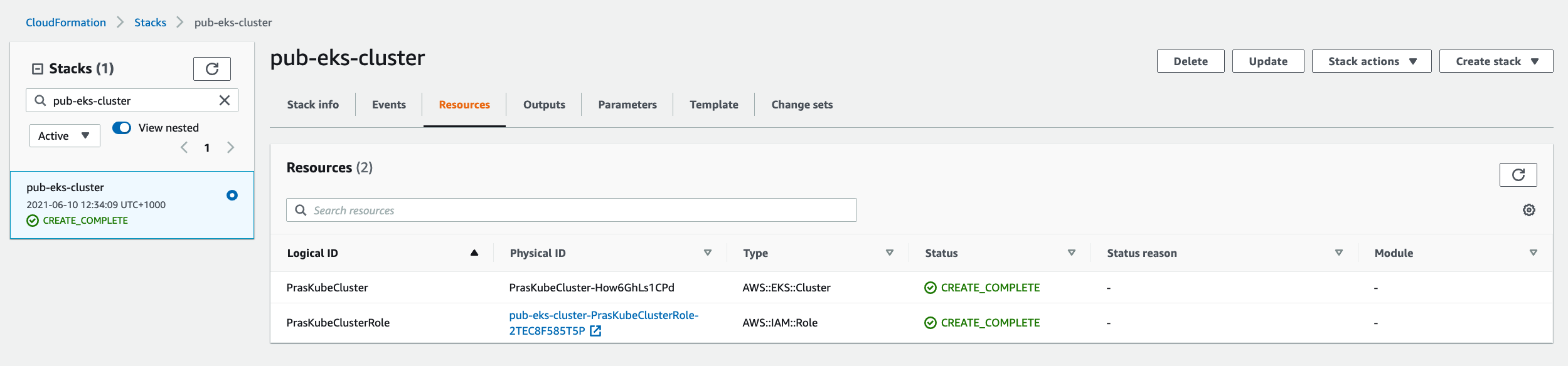

We can log in to the AWS Console to confirm that the CloudFormation stack has been created:

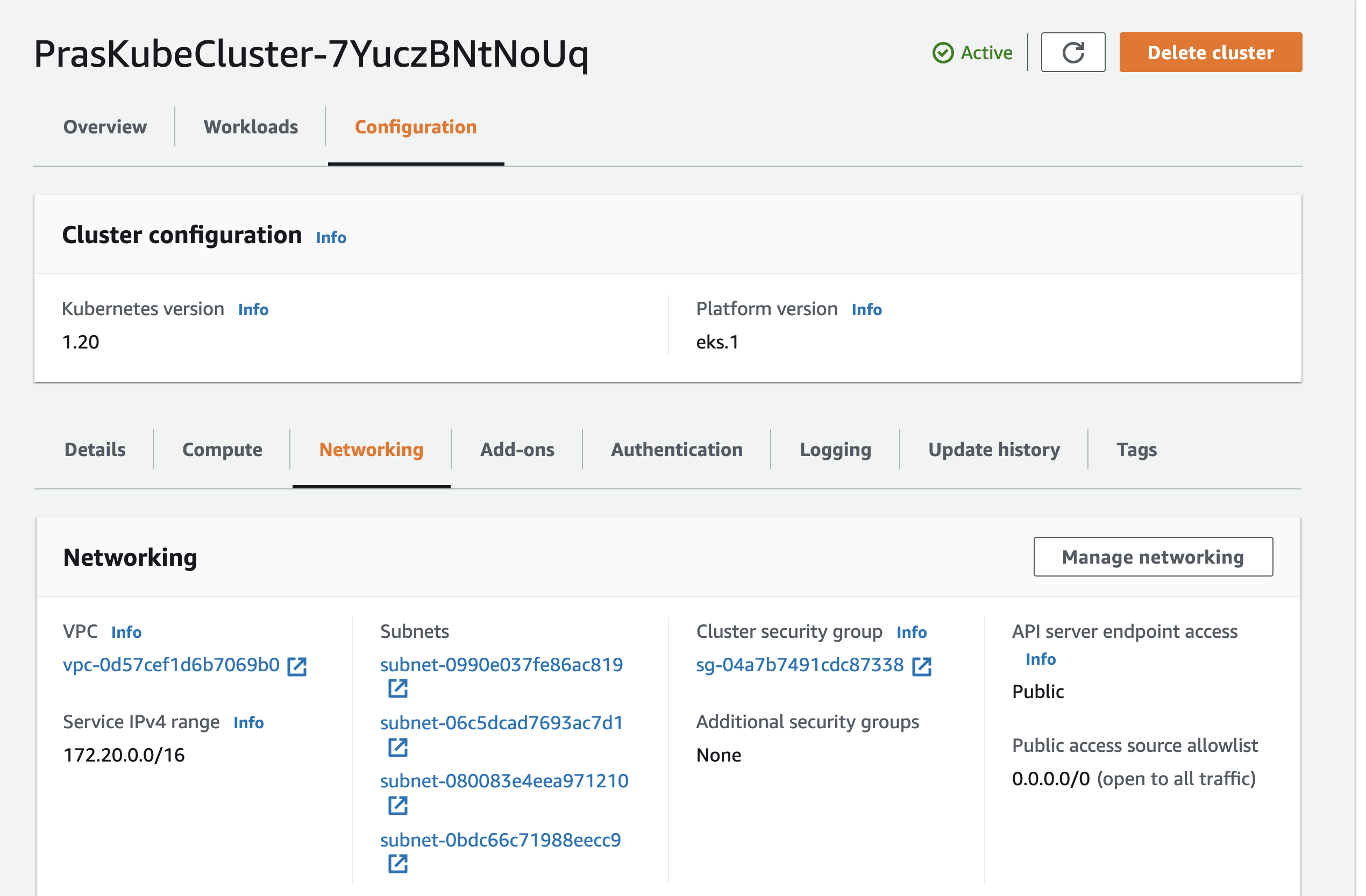
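If you prefer to check the stack from code rather than the console, a sketch like the following reads the stack status and its outputs. It assumes boto3, configured credentials and the stack name `pub-eks-cluster` from the deploy command. `stack_outputs` is a hypothetical helper that turns the `Outputs` list returned by `describe_stacks` into a dictionary.

```python
def stack_outputs(stack: dict) -> dict:
    """Map OutputKey -> OutputValue for one stack returned by describe_stacks()."""
    return {output["OutputKey"]: output["OutputValue"] for output in stack.get("Outputs", [])}


def describe_stack(stack_name: str = "pub-eks-cluster") -> dict:
    """Fetch a single stack description (needs boto3 and AWS credentials)."""
    import boto3  # lazy import: stack_outputs() works without boto3 installed

    return boto3.client("cloudformation").describe_stacks(StackName=stack_name)["Stacks"][0]
```

Once `describe_stack()["StackStatus"]` reports `CREATE_COMPLETE`, `stack_outputs(describe_stack())` should contain the `PrasKubeClusterName` key holding the generated cluster name.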

Finally, we can navigate to EKS console to look at the cluster itself,

One thing to notice straightaway is that the API Server is accessible via public and the API server is open to the world with the rule 0.0.0.0/0. Cloudformation for EKS does not yet support access configuration and other networking configurations. I decided to use a python script to change that. The script below will change the access configuration on the cluster to be Public and Private both and also restrict access to my home IP so that only I can access the cluster. Just export EKS_CLUSTER_NAME with the name of the EKS Cluster and run the script which will update EKS Configuration. The script also contains logging configuration, you can choose to ignore it for now, I am logging everything to CloudWatch so I can learn more about how Kubernetes components work.

"""

## 29/05/2021 Cloudformation does not support networking configuration (Public/Private configuration, Source IP whitelist)

## To be deprecated when cloudformation support is available

"""

import boto3

import logging

import requests

import os

logging.getLogger().setLevel(os.environ.get("LOG_LEVEL", "INFO"))

class EksCluster():

def __init__(self, cluster_name: str) -> None:

self.cluster_name = cluster_name

self.eks_client = boto3.client("eks")

def update_cluster_config(self, is_private, is_public=True):

"""

Update Access to the API Server - Choices are Public/Private or both

"""

public_ip= requests.get(r'http://jsonip.com').json()['ip']

logging.info(f"Your Public IP --> {public_ip}")

try:

try:

update_cluster_config = self.eks_client.update_cluster_config(

name=self.cluster_name,

resourcesVpcConfig={

"endpointPublicAccess": is_public,

"endpointPrivateAccess": is_private,

"publicAccessCidrs": [

f"{public_ip}/32"

]

},

)

logging.info(f"Update cluster config response: {update_cluster_config}")

except self.eks_client.exceptions.InvalidParameterException as cluster_update_exception:

logging.error(f"Unable to update eks cluster configuration with error {cluster_update_exception}")

try:

logging_configuration={

"clusterLogging": [

{

"types": [

"api", "audit", "authenticator", "controllerManager", "scheduler"

],

"enabled": True

}

]

}

update_cluster_config = self.eks_client.update_cluster_config(

name=self.cluster_name,

logging=logging_configuration

)

logging.info(f"Update cluster config response: {update_cluster_config}")

except self.eks_client.exceptions.InvalidParameterException as cluster_update_exception:

logging.error(f"Unable to update eks cluster configuration with error {cluster_update_exception}")

except self.eks_client.exceptions.ResourceInUseException as cluster_update_inprogress:

logging.error(f"Unable to update cluster at this time, an update is already in progress: {cluster_update_inprogress}")

if __name__ == "__main__":

instantiate_eks_cluster = EksCluster(os.environ["EKS_CLUSTER_NAME"])

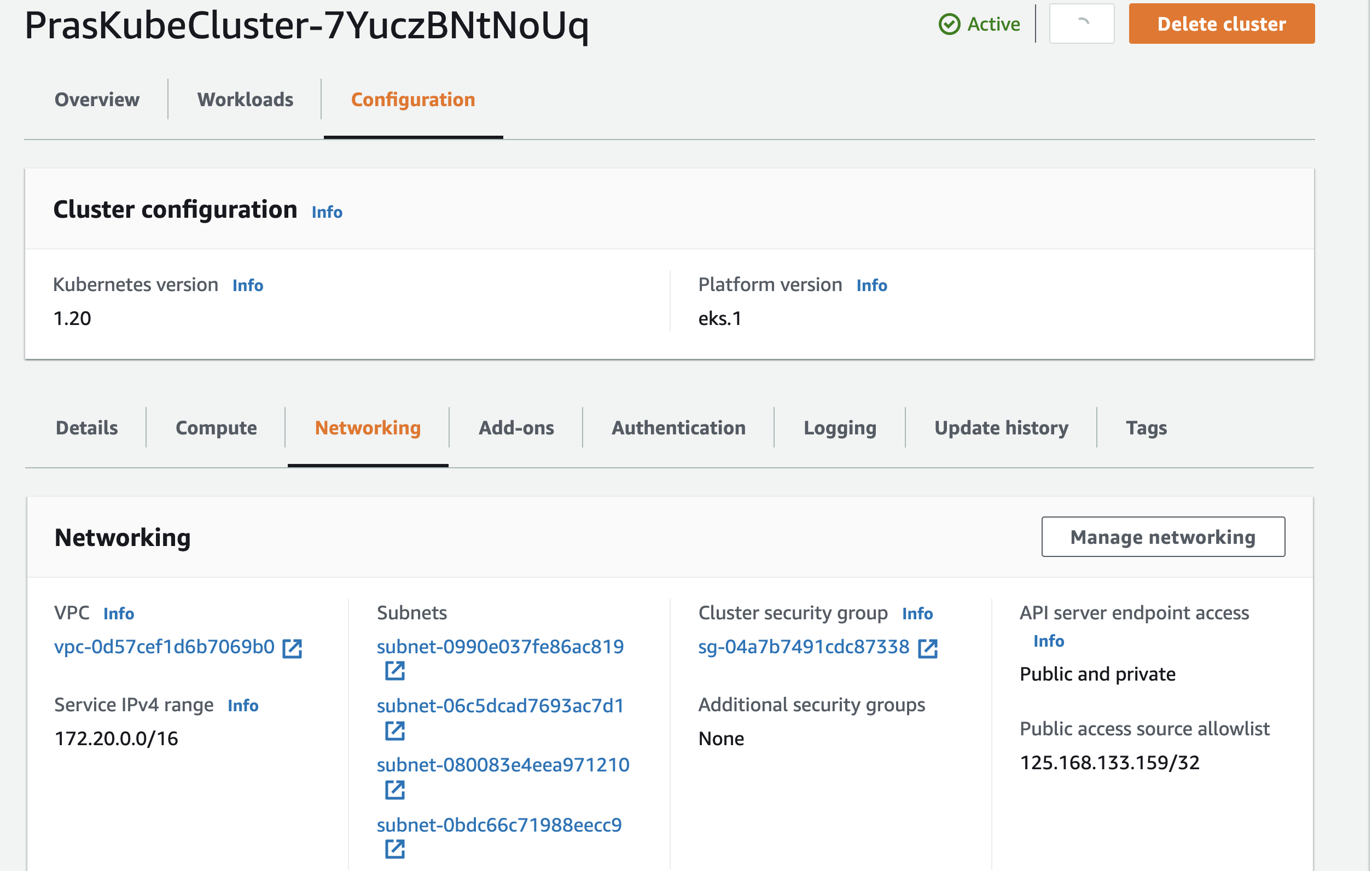

instantiate_eks_cluster.update_cluster_config(is_private=True)We can verify that the access configuration has changed by logging into AWS Console and navigating to EKS Service,

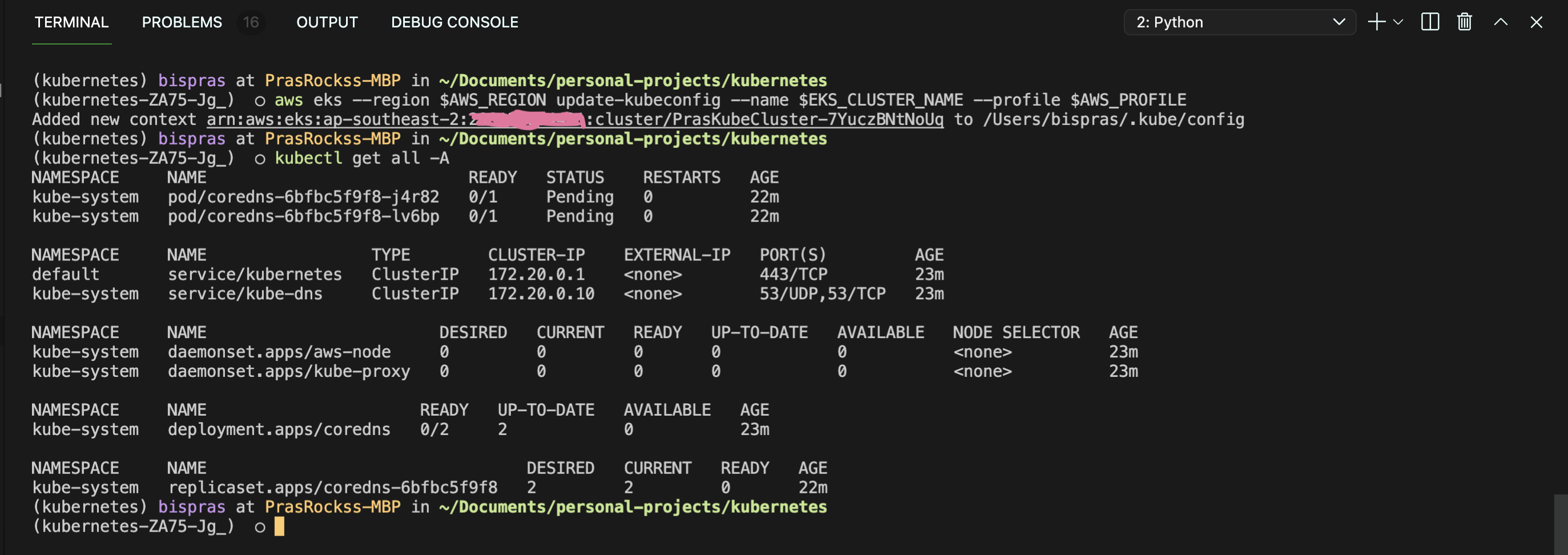
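The same check can be done from code. In this minimal sketch, `describe_cluster` returns the endpoint settings under `resourcesVpcConfig` (`endpointPublicAccess`, `endpointPrivateAccess`, `publicAccessCidrs`), and the hypothetical `public_endpoint_restricted` helper flags a cluster whose public endpoint is still open to `0.0.0.0/0`. It assumes boto3 and the same `EKS_CLUSTER_NAME` environment variable as the script above.

```python
import ipaddress


def public_endpoint_restricted(vpc_config: dict) -> bool:
    """Return False if the public API endpoint is reachable from 0.0.0.0/0."""
    if not vpc_config.get("endpointPublicAccess", False):
        return True  # no public endpoint at all
    # A missing or empty CIDR list is treated conservatively as 0.0.0.0/0 (the EKS default)
    cidrs = vpc_config.get("publicAccessCidrs") or ["0.0.0.0/0"]
    # A /0 network (0.0.0.0/0) covers every IPv4 address
    return all(ipaddress.ip_network(cidr, strict=False).prefixlen > 0 for cidr in cidrs)


def fetch_vpc_config(cluster_name: str) -> dict:
    """Return the resourcesVpcConfig section of describe_cluster (needs boto3 and credentials)."""
    import boto3  # lazy import: the check above works without boto3 installed

    cluster = boto3.client("eks").describe_cluster(name=cluster_name)["cluster"]
    return cluster["resourcesVpcConfig"]
```

`public_endpoint_restricted(fetch_vpc_config(os.environ["EKS_CLUSTER_NAME"]))` should return `True` once the script has finished, and `False` for the cluster as CloudFormation originally created it.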

Now that public access to the EKS API server is restricted to our IP address, let's configure kubeconfig locally so we can interact with the API server. Run the following command, with the correct environment variables set, to update kubeconfig:

```bash
aws eks --region "$AWS_REGION" update-kubeconfig --name "$EKS_CLUSTER_NAME" --profile "$AWS_PROFILE"
```

Then run any kubectl command (for example, `kubectl get svc`) to verify that you can connect to the API server.
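If you want to script this step, the sketch below builds the same `update-kubeconfig` command from the three environment variables used above and then runs a read-only `kubectl get svc` to confirm that the API server answers. It uses only the standard library (`subprocess`). The helper names are hypothetical, and it assumes `aws` and `kubectl` are on your `PATH`.

```python
import subprocess


def update_kubeconfig_cmd(env: dict) -> list:
    """Build the `aws eks update-kubeconfig` command from environment variables."""
    missing = [key for key in ("AWS_REGION", "EKS_CLUSTER_NAME", "AWS_PROFILE") if not env.get(key)]
    if missing:
        raise KeyError(f"Set these environment variables first: {missing}")
    return [
        "aws", "eks", "--region", env["AWS_REGION"],
        "update-kubeconfig", "--name", env["EKS_CLUSTER_NAME"],
        "--profile", env["AWS_PROFILE"],
    ]


def api_server_reachable(kubectl: str = "kubectl") -> bool:
    """Return True if `kubectl get svc` succeeds against the current kubeconfig context."""
    try:
        result = subprocess.run([kubectl, "get", "svc"], capture_output=True, text=True, timeout=30)
    except (FileNotFoundError, subprocess.TimeoutExpired):
        return False
    return result.returncode == 0
```

For example, `subprocess.run(update_kubeconfig_cmd(dict(os.environ)), check=True)` followed by `api_server_reachable()` repeats the two manual steps above.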

This ends part 1 of the tutorial. We deployed a managed EKS cluster on AWS using Infrastructure as Code (IaC), AWS CloudFormation in this case, and worked around CloudFormation's limitations with a Python script that restricts network access to the API server. We then updated kubeconfig and verified that we can interact with the API server.

In part 2 of this tutorial, we will deploy managed node groups on the EKS cluster we have just created. I hope you enjoyed reading this short article, thank you 😃
